Regulating the Future: Building Trust and Managing Risks in AI for FinTech

Written by Dharini Mohan, an MSc Financial Technology (FinTech) student at UWE Bristol and a part-time Service Associate at Hargreaves Lansdown.

Artificial Intelligence (AI) has emerged as a transformative force in the FinTech sector, promising to revolutionise processes, enhance customer experiences, and drive innovation. However, as AI adoption accelerates, concerns surrounding regulation, trust, and risk management have become increasingly prominent.

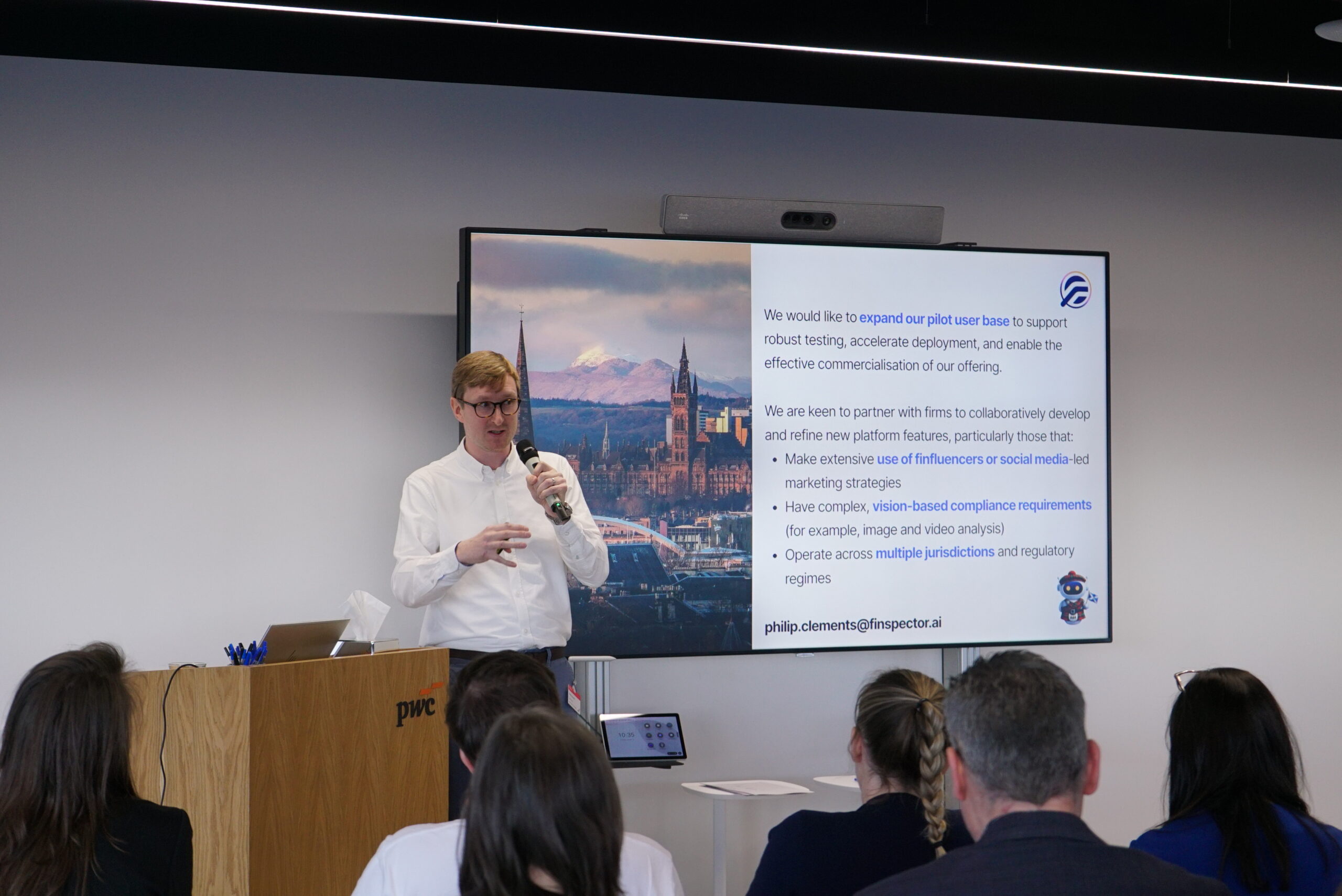

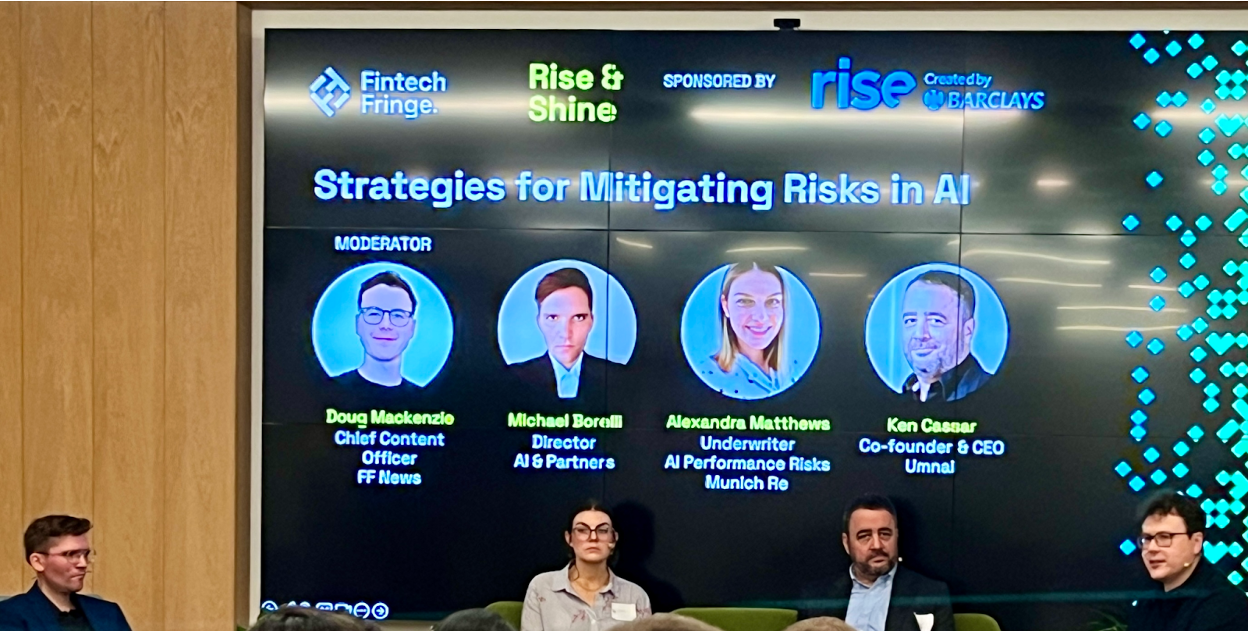

Earlier this month in London, the Rise & Shine event, organised by Fintech Fringe and sponsored by Rise (created by Barclays), brought panellists together to discuss why regulating AI in FinTech, building trust among stakeholders, and managing risks effectively are critical to sustainable growth and innovation in AI for FinTech. Here are some noteworthy insights and strategies that were shared.

Know Your AI (KYAI), Know Your Risk

While Know Your Customer (KYC) practices have long been a cornerstone of risk management for financial institutions, the emergence of AI adds a new dimension to this imperative. Understanding the nuances of customer profiles is crucial for accurate risk assessment, but it is equally essential to grasp the capabilities and limitations of AI systems in order to manage the risks they bring. The challenge lies in the inherent complexity and unpredictability of AI algorithms, which can introduce unforeseen risks, whether financial or intangible, into operations across different sectors. Without a comprehensive understanding of AI technologies and their potential implications, organisations risk being blindsided by vulnerabilities and shortcomings in their AI systems. Embracing the concept of KYAI is therefore essential for navigating AI-driven services and mitigating their risks effectively.

Never Hand Customer-Facing Operations Over to AI

Customers often seek personalised, empathetic interactions when addressing their queries or concerns, qualities that are inherently human and difficult for AI systems to replicate authentically. The recent case involving Air Canada illustrates the potential repercussions of relying on AI for customer-facing operations. Air Canada’s chatbot gave a traveller incorrect information about the airline’s bereavement fare policy, leading to a dispute over who was liable for the misinformation. The airline argued that its chatbot was a “separate legal entity” responsible for its own actions, but the tribunal ruled in favour of the passenger, holding that Air Canada is ultimately responsible for the accuracy of information provided through its channels, whether human or AI-driven. The case demonstrates why human oversight and accountability must be maintained in AI-driven customer interactions.

Plot Twist: Humans Can Make AI Better

It’s about finding the right balance: a little bit of this, a little bit of that. Because humans supply the data, any decision an AI system makes will reflect the data it was trained on and the purpose for which that data was collected. Human-based controls are therefore crucial, and it is up to each organisation to decide how to set its own rules and understand its responsibilities based on its clients’ needs. Integrating Human-in-the-Loop (HITL) approaches is valuable because it involves humans in both the training and testing stages of building an algorithm, enabling real-time data control and contributing to a dynamic risk profile. Tighter controls over how the model handles data inputs, where the data is sourced, and how it is split between training and testing are essential for measuring deviations effectively.
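One common way to put HITL into practice is confidence-based routing: the model decides clear-cut cases automatically, uncertain cases go to a human reviewer, and the reviewer’s verdicts are kept as labelled data for later training and testing. The Python sketch below illustrates that pattern under stated assumptions: the `Decision` and `HITLRouter` names, the meaning of the score (an estimated approval probability) and the 0.2/0.8 thresholds are all hypothetical, not something described by the panel.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    application_id: str
    score: float  # hypothetical: model's estimated probability that the application should be approved


@dataclass
class HITLRouter:
    """Automate confident decisions and escalate uncertain ones to a human reviewer."""

    low: float = 0.2   # assumed thresholds; in practice tuned on validation data
    high: float = 0.8
    review_queue: list = field(default_factory=list)
    feedback: list = field(default_factory=list)
    routed: int = 0
    escalated: int = 0

    def route(self, decision: Decision) -> str:
        self.routed += 1
        if decision.score >= self.high:
            return "approve"
        if decision.score <= self.low:
            return "decline"
        # Uncertain band: a human makes the call
        self.escalated += 1
        self.review_queue.append(decision)
        return "human_review"

    def resolve(self, decision: Decision, human_label: str) -> None:
        """Record the reviewer's verdict as a labelled example for retraining and testing."""
        self.review_queue.remove(decision)
        self.feedback.append((decision.application_id, decision.score, human_label))

    def review_rate(self) -> float:
        """Share of all routed decisions that were escalated to a human."""
        return self.escalated / self.routed if self.routed else 0.0


router = HITLRouter()
for d in [Decision("A1", 0.93), Decision("A2", 0.55), Decision("A3", 0.05)]:
    print(d.application_id, router.route(d))
router.resolve(router.review_queue[0], "approve")
print(f"review rate: {router.review_rate():.2f}")
```

Tracking the share of escalated cases gives a simple deviation signal: if it climbs over time, the incoming data may no longer resemble what the model was trained on.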

It is (Mathematically) Impossible to Eliminate All Discrimination and Bias

Common fairness criteria can conflict with one another: when groups differ in their underlying base rates, a useful model generally cannot satisfy them all at once. Given this impossibility of eliminating all discrimination and bias, organisations must deliberately choose which biases they are prepared to accept in their AI systems. Concerns were raised about the origins of generative AI, particularly OpenAI’s ChatGPT, which was developed in a research lab in San Francisco. Training data drawn from insufficiently diverse sources reflects a limited cultural context, which presents a significant challenge and underlines the need for challenger models that address these gaps. For instance, a model may hold little information about certain countries; its outputs may then appear discriminatory, even though they simply reflect the data it was trained on. Even after rigorous training, AI is not infallible and remains prone to errors, which highlights the importance of continuous refinement and validation. Human oversight also remains necessary, because diversity never takes care of itself within AI systems. Synthetic datasets, combined with real-world data, offer one way to address shortfalls in training data, providing broader coverage and helping to mitigate biases.
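To see why the heading says “mathematically” impossible, consider two fairness criteria often used in lending: demographic parity (both groups are approved at the same rate) and equalised odds (both groups see the same true-positive and false-positive rates). When the groups’ underlying repayment rates differ, a model that carries any real predictive information cannot satisfy both at once. The toy Python example below uses invented numbers for two hypothetical groups, A and B; it illustrates that tension and is not an analysis of any real dataset.

```python
def rates(y_true, y_pred):
    """Selection rate, true-positive rate and false-positive rate for one group."""
    pos = sum(y_true)
    neg = len(y_true) - pos
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return {"selection": sum(y_pred) / len(y_pred), "tpr": tp / pos, "fpr": fp / neg}


# Invented data: 1 = repays, 0 = defaults. Base rates differ: 60% in A, 30% in B.
y_a = [1] * 60 + [0] * 40
y_b = [1] * 30 + [0] * 70

# Scenario 1: a perfectly accurate model (predictions equal the true outcomes).
perfect_a = rates(y_a, y_a)  # selection 0.6, tpr 1.0, fpr 0.0
perfect_b = rates(y_b, y_b)  # selection 0.3, tpr 1.0, fpr 0.0
# Error rates match (equalised odds holds) but approval rates differ (parity fails).

# Scenario 2: force parity in B by also approving its first 30 defaulters.
forced_b = rates(y_b, [1] * 60 + [0] * 40)  # selection 0.6, tpr 1.0, fpr 30/70
# Approval rates now match, but B's false-positive rate no longer matches A's 0.0.

for label, r in [("A, perfect", perfect_a), ("B, perfect", perfect_b), ("B, forced parity", forced_b)]:
    print(f"{label:<17} selection={r['selection']:.2f} tpr={r['tpr']:.2f} fpr={r['fpr']:.2f}")
```

Fixing one criterion breaks the other, which is why organisations end up choosing which trade-off, and therefore which bias, they are prepared to accept.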

Key Strategies to Mitigate Risks: 1) Identifying 2) Classifying 3) Mapping Out

In navigating AI risks effectively, clearly articulating an organisation’s specific risks is the foundational step in risk management, complemented by due diligence and a thorough understanding of how AI is deployed. Organisations should consider whether to develop an in-house AI stack or outsource it, and how to implement post-deployment controls for the ongoing training and maintenance of AI systems. Risk classification is key, alongside crucial actions such as strengthening cybersecurity, protecting data privacy, monitoring third-party involvement, addressing opacity risks, and setting risk-based priorities. FinTech ventures must weigh the design of the product itself alongside regulatory compliance. Since the EU AI Act requires a risk management system to be established for high-risk AI systems, following this process also helps organisations stay up to date and compliant.
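The identify, classify and map-out steps can be sketched as a small risk register. In the Python example below, the tier names loosely follow the broad risk categories commonly used to describe the EU AI Act, but the classification rules, use cases and control lists are illustrative assumptions, not a reading of the Act’s legal tests; `AIUseCase`, `classify` and `map_out` are hypothetical names.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Illustrative tiers; a higher value means a higher priority."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3


@dataclass
class AIUseCase:
    name: str
    affects_credit_access: bool  # e.g. creditworthiness decisions about individuals
    customer_facing: bool        # interacts directly with customers
    third_party_model: bool      # outsourced rather than built in-house


# Step 2 (classify): assumed rules, for illustration only.
def classify(uc: AIUseCase) -> Tier:
    if uc.affects_credit_access:
        return Tier.HIGH
    if uc.customer_facing:
        return Tier.LIMITED
    return Tier.MINIMAL


# Hypothetical baseline controls per tier.
CONTROLS = {
    Tier.HIGH: ["risk management system", "human oversight", "bias testing", "post-deployment monitoring"],
    Tier.LIMITED: ["disclose AI use to customers", "human escalation path"],
    Tier.MINIMAL: ["inventory entry", "periodic review"],
}


# Step 3 (map out): attach controls and order by tier so priorities are explicit.
def map_out(use_cases):
    rows = []
    for uc in use_cases:
        tier = classify(uc)
        controls = list(CONTROLS[tier])  # copy so the shared defaults are not mutated
        if uc.third_party_model:
            controls.append("third-party vendor due diligence")
        rows.append((uc.name, tier, controls))
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Step 1 (identify): a hypothetical inventory of AI use cases.
register = [
    AIUseCase("support chatbot", affects_credit_access=False, customer_facing=True, third_party_model=True),
    AIUseCase("credit scoring model", affects_credit_access=True, customer_facing=False, third_party_model=False),
    AIUseCase("internal document search", affects_credit_access=False, customer_facing=False, third_party_model=False),
]

for name, tier, controls in map_out(register):
    print(f"{tier.name:<8} {name}: {', '.join(controls)}")
```

Sorting by tier is a simple way to express risk-based priorities, and attaching a vendor due-diligence control to outsourced models reflects the in-house versus outsource decision discussed above.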

Conclusion

As AI continues to reshape the FinTech landscape, the importance of regulating AI, fostering trust, and managing risks cannot be emphasised enough. Regulatory frameworks must evolve to keep pace with technological advancements, ensuring responsible and ethical deployment of AI. It’s essential to acknowledge that AI literacy is just as vital as financial literacy, enabling the FinTech industry to fully leverage AI’s potential while navigating its inherent complexities and uncertainties.

Risk management is not merely a matter of ticking boxes; it requires continuous vigilance and adaptability.